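I. Introduction

1. AVAsset

An asset is a media resource that can come from a file or from the user's photo library. Each asset object is identified by a URL, which can be a local file path or a network stream.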

2. AVAssetTrack

An AVAsset is a collection of AVAssetTrack objects, such as audio, video, text, closed captions, and subtitles.

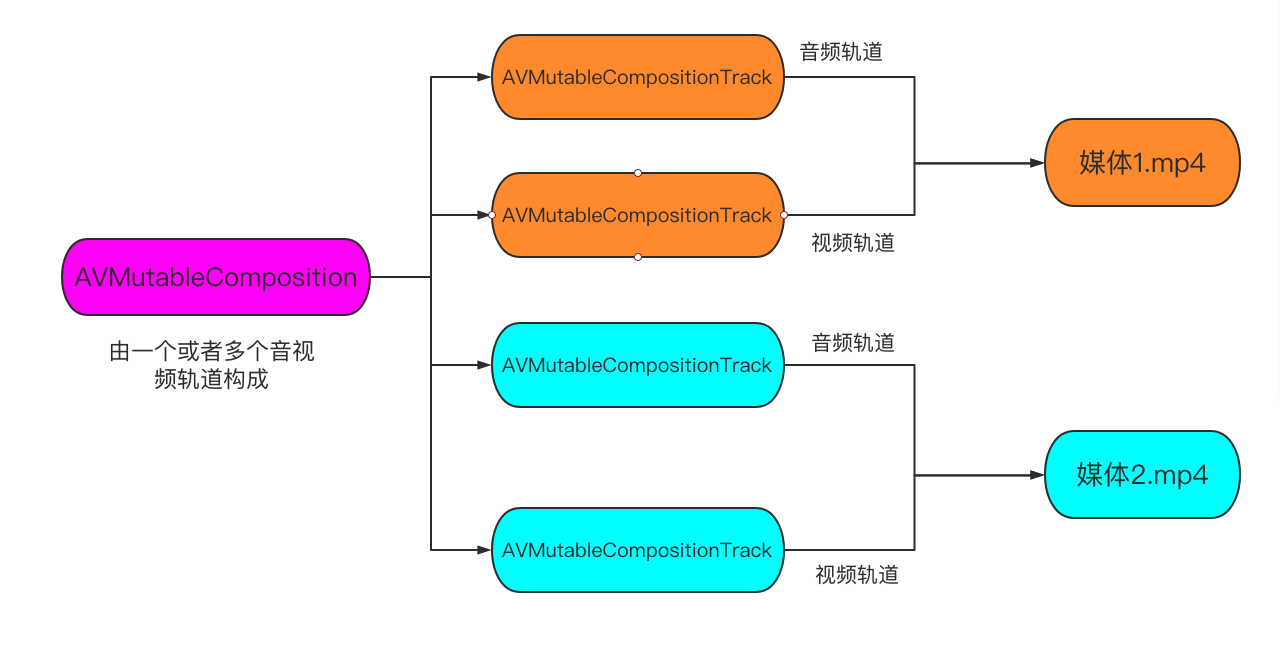

3. AVComposition / AVMutableComposition

AVMutableComposition lets you add and remove AVAsset content so that one or more assets are combined into a new video. To control how the combined audio and video are presented, use AVMutableAudioMix and AVMutableVideoComposition to coordinate the resources.
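As a minimal sketch of how an asset might be added to a composition, the helper below appends the first video track and the first audio track of one asset at a given start time. `DemoAppendAsset` is a hypothetical name that does not appear in the original article, and the sketch assumes the asset's `tracks` and `duration` values have already been loaded.

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of the original article).
// Appends the first video track and the first audio track of `asset`
// to `composition`, starting at `startTime`.
// Assumption: the asset's "tracks" and "duration" values are already loaded.
static BOOL DemoAppendAsset(AVMutableComposition *composition,
                            AVAsset *asset,
                            CMTime startTime,
                            NSError **outError)
{
    CMTimeRange fullRange = CMTimeRangeMake(kCMTimeZero, asset.duration);

    for (AVMediaType mediaType in @[AVMediaTypeVideo, AVMediaTypeAudio]) {
        AVAssetTrack *source = [asset tracksWithMediaType:mediaType].firstObject;
        if (source == nil) {
            continue; // the asset has no track of this type (for example, a silent clip)
        }

        // Reuse the composition's existing track of this type, or create one.
        AVMutableCompositionTrack *target =
            [composition tracksWithMediaType:mediaType].firstObject;
        if (target == nil) {
            target = [composition addMutableTrackWithMediaType:mediaType
                                              preferredTrackID:kCMPersistentTrackID_Invalid];
        }

        if (![target insertTimeRange:fullRange
                             ofTrack:source
                              atTime:startTime
                               error:outError]) {
            return NO; // *outError describes the failure
        }
    }
    return YES;
}
```

Calling the helper once per asset, with `startTime` set to the composition's current `duration`, places the clips back to back on one pair of tracks. Overlapping clips for a transition needs two video tracks instead, as in the full example in section III.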

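4. AVMutableVideoComposition

AVVideoComposition / AVMutableVideoComposition are the central classes for video compositing in AVFoundation.

They give an overall description of how two or more video tracks are combined. A video composition consists of a set of time ranges, each paired with instructions that describe the compositing behavior during that range, so the behavior is defined at every point in time of the composition.

They manage all video tracks and determine the size of the final video; cropping is configured here.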

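5. AVMutableCompositionTrack

AVMutableCompositionTrack represents one track (audio, video, and so on) of the new video assembled from multiple AVAssets. Matching material, such as picture-in-picture footage or a watermark, can be inserted into it.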

6. AVMutableVideoCompositionLayerInstruction

AVMutableVideoCompositionLayerInstruction applies per-track effects to one video track: transforms (scaling, rotation, translation), cropping, and opacity changes.
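The following sketch shows what configuring a layer instruction could look like. `DemoLayerInstruction` is a hypothetical helper, and the scale factor and crop rectangle are arbitrary illustration values, not settings from the original article.

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of the original article).
// Builds a layer instruction for the track with the given ID that
// scales the picture, crops it, and fades it out over `fadeRange`.
static AVMutableVideoCompositionLayerInstruction *
DemoLayerInstruction(CMPersistentTrackID trackID, CMTimeRange fadeRange)
{
    AVMutableVideoCompositionLayerInstruction *layer =
        [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstruction];
    layer.trackID = trackID;

    // Scale the track to half size from the start of the instruction.
    [layer setTransform:CGAffineTransformMakeScale(0.5, 0.5) atTime:kCMTimeZero];

    // Show only the top-left 640x360 region of the source frame (illustrative values).
    [layer setCropRectangle:CGRectMake(0, 0, 640, 360) atTime:kCMTimeZero];

    // Fade from fully opaque to fully transparent across fadeRange.
    [layer setOpacityRampFromStartOpacity:1.0
                             toEndOpacity:0.0
                                timeRange:fadeRange];
    return layer;
}
```

The returned object takes effect only once it is placed in the `layerInstructions` array of an AVMutableVideoCompositionInstruction whose `timeRange` covers the times used here.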

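7. AVMutableVideoCompositionInstruction

An instruction determines the state of every track within one timeRange. Each instruction contains one or more AVMutableVideoCompositionLayerInstruction objects, and an AVVideoComposition is in turn made up of multiple AVVideoCompositionInstruction objects.

The most important piece of data an AVVideoCompositionInstruction provides is its time range on the composition timeline: the span during which a given compositing behavior applies. The compositing behavior itself is defined by the instruction's set of AVMutableVideoCompositionLayerInstruction objects.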
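Because the instruction time ranges of a video composition must neither overlap nor leave gaps, it can help to check them before exporting. The function below is a small sketch of such a check; `DemoInstructionsCoverTimeline` is a hypothetical name and the check is deliberately strict (exact time equality).

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of the original article).
// Returns YES when the instructions start at time zero, each one begins
// exactly where the previous one ends, and the last one ends at totalDuration.
static BOOL DemoInstructionsCoverTimeline(NSArray<AVVideoCompositionInstruction *> *instructions,
                                          CMTime totalDuration)
{
    CMTime cursor = kCMTimeZero;
    for (AVVideoCompositionInstruction *instruction in instructions) {
        CMTimeRange range = instruction.timeRange;
        if (CMTimeCompare(range.start, cursor) != 0) {
            return NO; // gap or overlap before this instruction
        }
        cursor = CMTimeRangeGetEnd(range);
    }
    return CMTimeCompare(cursor, totalDuration) == 0;
}
```

AVFoundation also offers its own validation through `-[AVVideoComposition isValidForAsset:timeRange:validationDelegate:]`, which checks more than the time ranges; the sketch above only illustrates the contiguity rule.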

8. AVAssetExportSession

AVAssetExportSession is mainly used to export video.
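A hedged sketch of setting up an export session follows. `DemoMakeExporter` is a hypothetical helper; it assumes MP4 output is wanted and checks `supportedFileTypes` first, since assigning an unsupported `outputFileType` raises an exception.

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of the original article).
// Returns an export session configured for MP4 output, or nil when the
// preset cannot be used with this asset or cannot produce MP4.
static AVAssetExportSession *DemoMakeExporter(AVAsset *asset, NSURL *outputURL)
{
    AVAssetExportSession *exporter =
        [[AVAssetExportSession alloc] initWithAsset:asset
                                         presetName:AVAssetExportPresetHighestQuality];
    if (exporter == nil) {
        return nil; // the preset is not compatible with this asset
    }
    if (![exporter.supportedFileTypes containsObject:AVFileTypeMPEG4]) {
        return nil; // MP4 is not available for this asset and preset
    }
    exporter.outputURL = outputURL;          // the file must not already exist
    exporter.outputFileType = AVFileTypeMPEG4;
    exporter.shouldOptimizeForNetworkUse = YES;
    return exporter;
}
```

The caller would then assign a `videoComposition` if one is needed and start the export with `exportAsynchronouslyWithCompletionHandler:`, checking `status` and `error` in the handler.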

9. AVAssetTrackSegment

AVAssetTrackSegment is an immutable track segment.

10. AVCompositionTrackSegment

AVCompositionTrackSegment is the track segment used by composition tracks; it inherits from AVAssetTrackSegment.

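II. Overview of merging multiple videos

AVComposition inherits from AVAsset. It combines media data from multiple file-based sources so that they can be presented or processed together.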

AVMutableComposition *mutableComposition = [AVMutableComposition composition];

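// Add resources and perform other edits here:

// 1. Add the video track of media 1

// 2. Add the audio track of media 1

// 3. Add the video track of media 2

// 4. Add the audio track of media 2

// ...

// Create an immutable composition from the mutable one for later use

AVComposition *composition = [mutableComposition copy];

AVPlayerItem *playerItem = [[AVPlayerItem alloc] initWithAsset:composition];

III. Adding transitions when merging multiple videos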

![Figure [2] - AVFoundation - Merging multiple media (5) - Dissolve transitions between multiple videos - 猿说编程](https://www.codersrc.com/wp-content/uploads/2021/09/42ed37b390f90c0.png)

![Figure [3] - AVFoundation - Merging multiple media (5) - Dissolve transitions between multiple videos - 猿说编程](https://www.codersrc.com/wp-content/uploads/2021/09/96e79218965eb72.png)
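The timeline in the figures alternates between pass-through ranges, where a single clip is visible, and transition ranges, where two clips overlap. The sketch below computes both sets of ranges for any number of clips. `DemoBuildTimeline` is a hypothetical helper, and it assumes every clip is long enough to give up one transition duration at each end that borders another clip.

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of the original article).
// durations:   clip durations wrapped with +[NSValue valueWithCMTime:]
// transition:  duration of each dissolve
// passThrough / transitions: receive CMTimeRange values in timeline order
static void DemoBuildTimeline(NSArray<NSValue *> *durations,
                              CMTime transition,
                              NSMutableArray<NSValue *> *passThrough,
                              NSMutableArray<NSValue *> *transitions)
{
    CMTime cursor = kCMTimeZero;
    NSUInteger count = durations.count;

    for (NSUInteger i = 0; i < count; i++) {
        CMTime visible = durations[i].CMTimeValue;
        if (i > 0) {
            visible = CMTimeSubtract(visible, transition); // head is used by the incoming dissolve
        }
        if (i + 1 < count) {
            visible = CMTimeSubtract(visible, transition); // tail is used by the outgoing dissolve
        }

        [passThrough addObject:[NSValue valueWithCMTimeRange:CMTimeRangeMake(cursor, visible)]];
        cursor = CMTimeAdd(cursor, visible);

        if (i + 1 < count) {
            [transitions addObject:[NSValue valueWithCMTimeRange:CMTimeRangeMake(cursor, transition)]];
            cursor = CMTimeAdd(cursor, transition);
        }
    }
}
```

For clips of 5 s, 4 s, and 5 s with a 1 s transition, this gives pass-through ranges starting at 0 s, 5 s, and 8 s (lasting 4 s, 2 s, and 4 s) and transition ranges starting at 4 s and 7 s, for a total length of 12 s.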

The complete example code is as follows:

/******************************************************************************************/

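//@Author: 猿说编程

//@Blog (personal blog): www.codersrc.com

//@File: AVFoundation - Merging multiple media (5) - Transitions between the end of one video and the start of the next

//@Time: 2021/09/12 07:30

//@Motto: Without accumulating small steps one cannot travel a thousand miles, and without gathering small streams there can be no rivers and seas; a rewarding life in programming takes persistent accumulation!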

/******************************************************************************************/

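#import "ViewController.h"

#import <AVFoundation/AVFoundation.h>

#import <AVKit/AVKit.h>

// The original listing omits the declarations below; they are added so that the _assets and _videoTracks ivars and the closing @end have something to belong to.

@interface ViewController () {

NSArray<AVAsset *> *_assets;

NSMutableArray<AVMutableCompositionTrack *> *_videoTracks;

}

@end

@implementation ViewController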

-(AVComposition*)configurationComposition

{

AVMutableComposition* composition = [AVMutableComposition composition];

AVMutableCompositionTrack* videoTrack1 = [composition addMutableTrackWithMediaType:AVMediaTypeVideo preferredTrackID:kCMPersistentTrackID_Invalid];

AVMutableCompositionTrack* videoTrack2 = [composition addMutableTrackWithMediaType:AVMediaTypeVideo preferredTrackID:kCMPersistentTrackID_Invalid];

AVAsset* asset1 = [_assets objectAtIndex:0];

AVAssetTrack* assetTrack1 = [[asset1 tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0];

AVAsset* asset2 = [_assets objectAtIndex:1];

AVAssetTrack* assetTrack2 = [[asset2 tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0];

CMTime cursorTime = kCMTimeZero;

// Transition duration: 1 second

CMTime transTime = CMTimeMake(1, 1);

// Clip 1 goes on track 1, starting at time zero

[videoTrack1 insertTimeRange:CMTimeRangeMake(kCMTimeZero, assetTrack1.asset.duration) ofTrack:assetTrack1 atTime:cursorTime error:nil];

// Advance to the end of clip 1, then step back by the transition duration so that the next clip overlaps its tail

cursorTime = CMTimeAdd(cursorTime, assetTrack1.asset.duration);

cursorTime = CMTimeSubtract(cursorTime, transTime);

// Clip 2 goes on track 2, overlapping the last second of clip 1

[videoTrack2 insertTimeRange:CMTimeRangeMake(kCMTimeZero, assetTrack2.asset.duration) ofTrack:assetTrack2 atTime:cursorTime error:nil];

cursorTime = CMTimeAdd(cursorTime, assetTrack2.asset.duration);

cursorTime = CMTimeSubtract(cursorTime, transTime);

// Clip 1 goes on track 1 again, overlapping the last second of clip 2

[videoTrack1 insertTimeRange:CMTimeRangeMake(kCMTimeZero, assetTrack1.asset.duration) ofTrack:assetTrack1 atTime:cursorTime error:nil];

if(!_videoTracks)

_videoTracks = [NSMutableArray array];

[_videoTracks addObject:videoTrack1];

[_videoTracks addObject:videoTrack2];

return composition;

}

-(AVVideoComposition*)videoCompositionWithAsset:(AVAsset*)asset

{

CMTime cursorTime = kCMTimeZero;

CMTime transTime = CMTimeMake(1, 1);

// Export session; the preset affects the output resolution

AVAssetExportSession* exporter = [[AVAssetExportSession alloc] initWithAsset:asset presetName:AVAssetExportPresetHighestQuality];

AVMutableVideoComposition* videoComposition = [AVMutableVideoComposition videoCompositionWithPropertiesOfAsset:asset];

NSMutableArray* passThrouTime = [NSMutableArray array];

NSMutableArray* transitionTime = [NSMutableArray array];

NSArray* tracks = [asset tracksWithMediaType:AVMediaTypeVideo];

AVAsset* asset1 = [_assets objectAtIndex:0];

AVAssetTrack* assetTrack1 = [[asset1 tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0];

AVAsset* asset2 = [_assets objectAtIndex:1];

AVAssetTrack* assetTrack2 = [[asset2 tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0];

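// Compute the time ranges: pass-through ranges (one clip visible) and transition ranges (two clips overlapping)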

NSArray* oriTracks = @[assetTrack1,assetTrack2];

for (int i = 0; i < 3; i++) {

AVAssetTrack* curTack = [oriTracks objectAtIndex:i%2];

if (i == 0) {

[passThrouTime addObject:[NSValue valueWithCMTimeRange:CMTimeRangeMake(kCMTimeZero, CMTimeSubtract(curTack.asset.duration, transTime))]];

cursorTime = CMTimeAdd(cursorTime, CMTimeSubtract(curTack.asset.duration, transTime));

}

else

{

if(i+1<3)

[passThrouTime addObject:[NSValue valueWithCMTimeRange:CMTimeRangeMake(cursorTime, CMTimeSubtract(CMTimeSubtract(curTack.asset.duration, transTime), transTime))]];

else

[passThrouTime addObject:[NSValue valueWithCMTimeRange:CMTimeRangeMake(cursorTime, CMTimeSubtract(curTack.asset.duration, transTime))]];

cursorTime = CMTimeAdd(cursorTime, curTack.asset.duration);

cursorTime = CMTimeSubtract(cursorTime, transTime);

cursorTime = CMTimeSubtract(cursorTime, transTime);

}

if (i + 1 < 3) {

[transitionTime addObject:[NSValue valueWithCMTimeRange:CMTimeRangeMake(cursorTime, transTime)]];

cursorTime = CMTimeAdd(cursorTime, transTime);

}

}

// Build the instructions: pass-through segments and dissolve transitions

NSMutableArray* instructions = [NSMutableArray array];

for (int i = 0; i < passThrouTime.count; i++) {

AVMutableVideoCompositionInstruction* videoCompositionInstruction = [AVMutableVideoCompositionInstruction videoCompositionInstruction];

videoCompositionInstruction.timeRange = [[passThrouTime objectAtIndex:i] CMTimeRangeValue];

AVMutableVideoCompositionLayerInstruction* layerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:[tracks objectAtIndex:i%2]];

videoCompositionInstruction.layerInstructions = @[layerInstruction];

[instructions addObject:videoCompositionInstruction];

if (i < transitionTime.count) {

AVMutableVideoCompositionInstruction* transCompositionInstruction = [AVMutableVideoCompositionInstruction videoCompositionInstruction];

transCompositionInstruction.timeRange = [[transitionTime objectAtIndex:i] CMTimeRangeValue];

// The outgoing clip fades out: its opacity ramps from 1.0 to 0.0

AVMutableVideoCompositionLayerInstruction* fromLayerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:tracks[i%2]];

[fromLayerInstruction setOpacityRampFromStartOpacity:1.0 toEndOpacity:0.0 timeRange:[[transitionTime objectAtIndex:i] CMTimeRangeValue]];

// The incoming clip fades in: its opacity ramps from 0.0 to 1.0

AVMutableVideoCompositionLayerInstruction* toLayerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:tracks[1-i%2]];

[toLayerInstruction setOpacityRampFromStartOpacity:0.0 toEndOpacity:1.0 timeRange:[[transitionTime objectAtIndex:i] CMTimeRangeValue]];

transCompositionInstruction.layerInstructions = @[fromLayerInstruction,toLayerInstruction];

[instructions addObject:transCompositionInstruction];

}

}

videoComposition.instructions = instructions;

// Get the resolution

CGSize renderSize = [self getNaturalSize:tracks[0]];

// Set the resolution

videoComposition.renderSize = renderSize;

// Set the video frame rate

videoComposition.frameDuration = _videoTracks[0].minFrameDuration;

videoComposition.renderScale = 1.0;

NSString* outPath = [NSString stringWithFormat:@"%@/cache.mp4",[self dirDoc]];

[[NSFileManager defaultManager] removeItemAtPath:outPath error:nil];

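// Exporter settings

exporter.outputURL = [NSURL fileURLWithPath:outPath];

// Use the MPEG-4 container so that the file type matches the .mp4 extension of outPath

exporter.outputFileType = AVFileTypeMPEG4;

exporter.shouldOptimizeForNetworkUse = YES; // optimize the file for network playback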

exporter.videoComposition = videoComposition;

[exporter exportAsynchronouslyWithCompletionHandler:^{

dispatch_async(dispatch_get_main_queue(), ^{

if (exporter.status == AVAssetExportSessionStatusCompleted) {

NSLog(@"Export succeeded: %@",outPath);

// Play the exported file

[self playVideoWithUrl:[NSURL fileURLWithPath:outPath]];

}else{

NSLog(@"Export failed: %@",exporter.error);

}

});

}];

return [videoComposition copy];

}

-(void)exportVideo

{

AVComposition* composition = [self configurationComposition];

[self videoCompositionWithAsset:composition];

}

- (void)viewDidLoad {

[super viewDidLoad];

AVAsset* asset1 = [AVAsset assetWithURL:[[NSBundle mainBundle] URLForResource:@"1.mp4" withExtension:nil]];

AVAsset* asset2 = [AVAsset assetWithURL:[[NSBundle mainBundle] URLForResource:@"2.mp4" withExtension:nil]];

_assets = @[asset1,asset2];

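// Load the media asynchronously; "tracks" is loaded as well because the code above calls tracksWithMediaType:

NSArray* keys = @[@"duration", @"tracks"];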

[asset1 loadValuesAsynchronouslyForKeys:keys completionHandler:^{

[asset2 loadValuesAsynchronouslyForKeys:keys completionHandler:^{

dispatch_async(dispatch_get_main_queue(), ^{

[self exportVideo];

});

}];

}];

}

- (CGSize)getNaturalSize:(AVAssetTrack*)track{

UIImageOrientation assetOrientation = UIImageOrientationUp;

BOOL isPortrait = NO;

CGAffineTransform videoTransform = track.preferredTransform;

if (videoTransform.a == 0 && videoTransform.b == 1.0 && videoTransform.c == -1.0 && videoTransform.d == 0) {

assetOrientation = UIImageOrientationRight;

isPortrait = YES;

}

if (videoTransform.a == 0 && videoTransform.b == -1.0 && videoTransform.c == 1.0 && videoTransform.d == 0) {

assetOrientation = UIImageOrientationLeft;

isPortrait = YES;

}

if (videoTransform.a == 1.0 && videoTransform.b == 0 && videoTransform.c == 0 && videoTransform.d == 1.0) {

assetOrientation = UIImageOrientationUp;

}

if (videoTransform.a == -1.0 && videoTransform.b == 0 && videoTransform.c == 0 && videoTransform.d == -1.0) {

assetOrientation = UIImageOrientationDown;

}

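// Use the track's naturalSize together with the rotation found in preferredTransform: for a portrait video, swap width and height to get the displayed size

CGSize naturalSize;

if(isPortrait){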

naturalSize = CGSizeMake(track.naturalSize.height, track.naturalSize.width);

} else {

naturalSize = track.naturalSize;

}

return naturalSize;

}

-(void)playVideoWithUrl:(NSURL *)url{

AVPlayerViewController *playerViewController = [[AVPlayerViewController alloc]init];

playerViewController.player = [[AVPlayer alloc]initWithURL:url];

playerViewController.view.frame = self.view.frame;

playerViewController.view.layer.backgroundColor = [UIColor redColor].CGColor;

[playerViewController.player play];

[self presentViewController:playerViewController animated:YES completion:nil];

}

// Get the Documents directory

-(NSString *)dirDoc{

return [NSSearchPathForDirectoriesInDomains(NSDocumentDirectory, NSUserDomainMask, YES) firstObject];

}

@end

The exported video looks like this:

![Figure [4] - AVFoundation - Merging multiple media (5) - Dissolve transitions between multiple videos - 猿说编程](https://www.codersrc.com/wp-content/uploads/2021/09/bae5e3208a3c700.gif)

